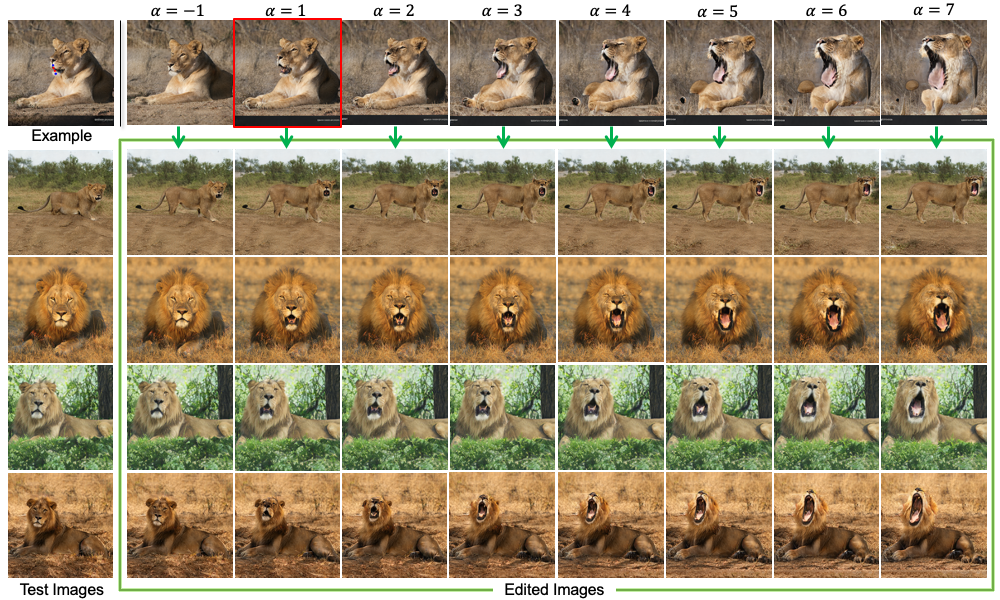

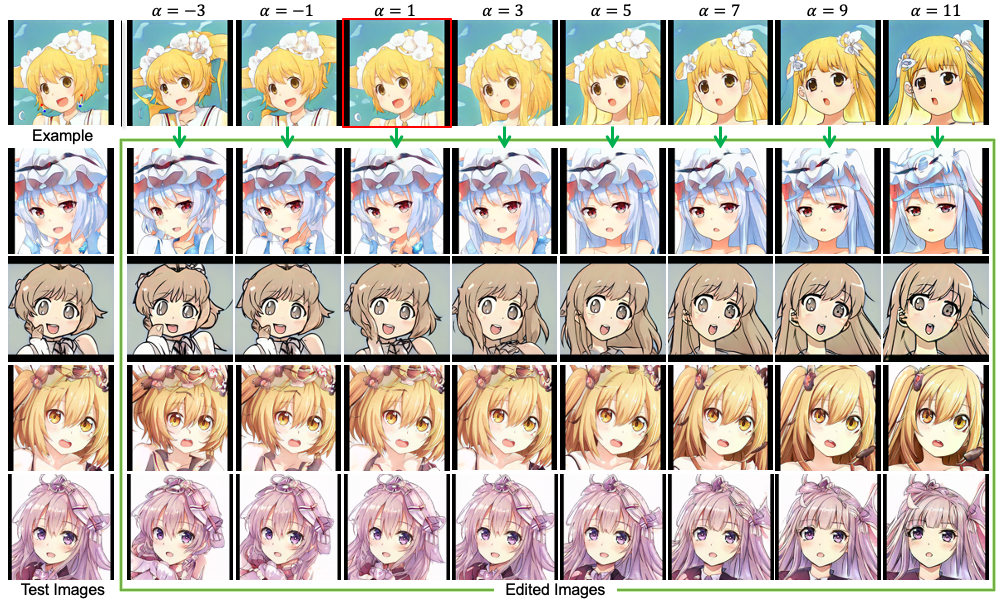

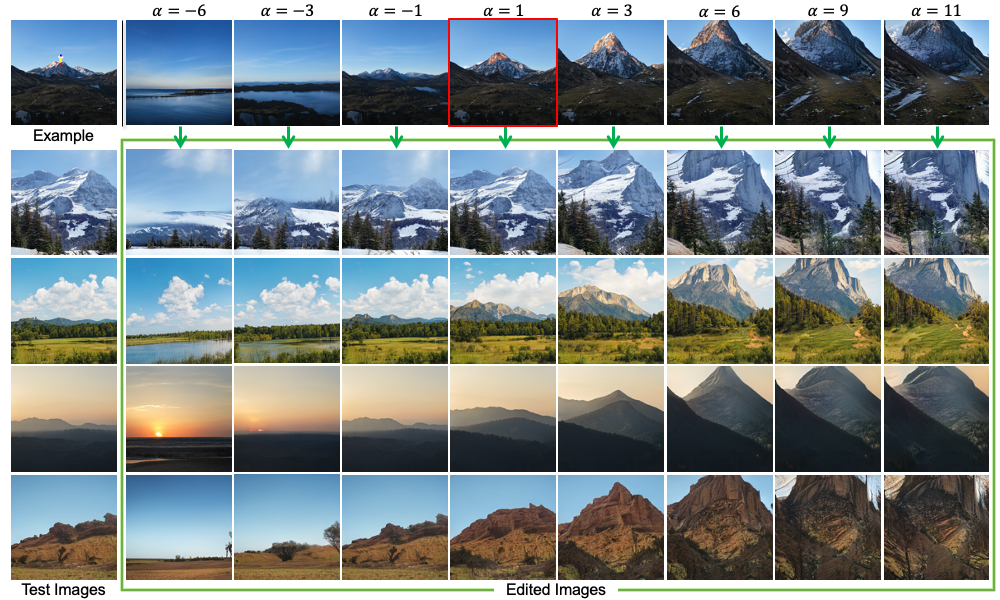

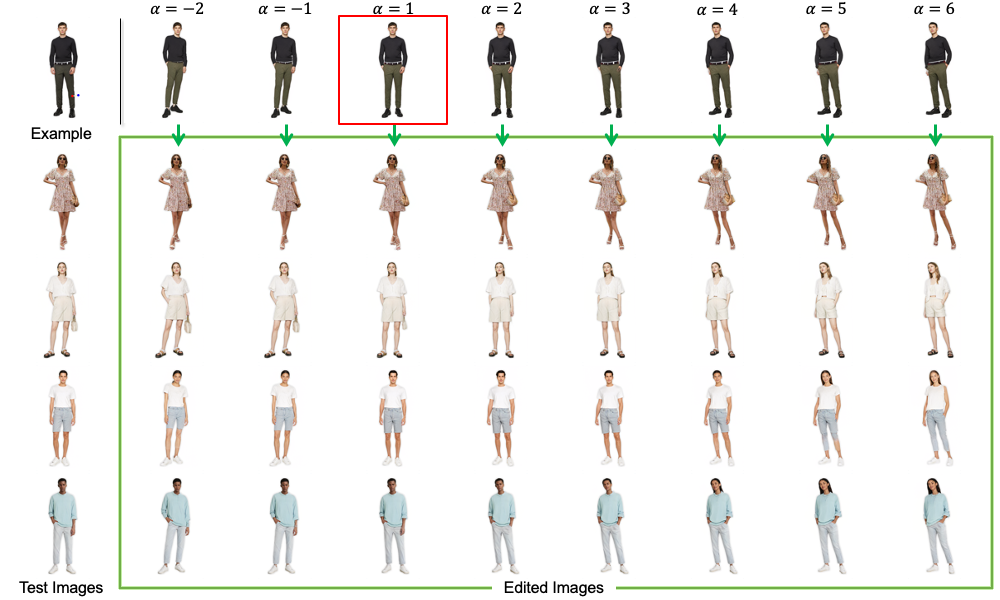

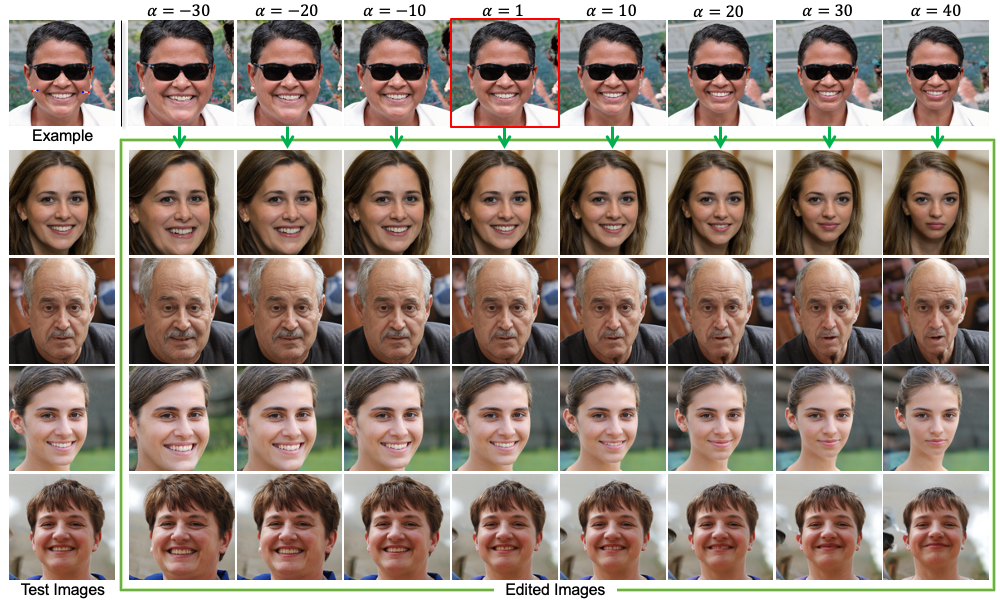

Given an edit specified by a user on an example image (e.g., a dog's pose), our method can automatically transfer that edit to other test images (e.g., all dogs end up in the same pose).

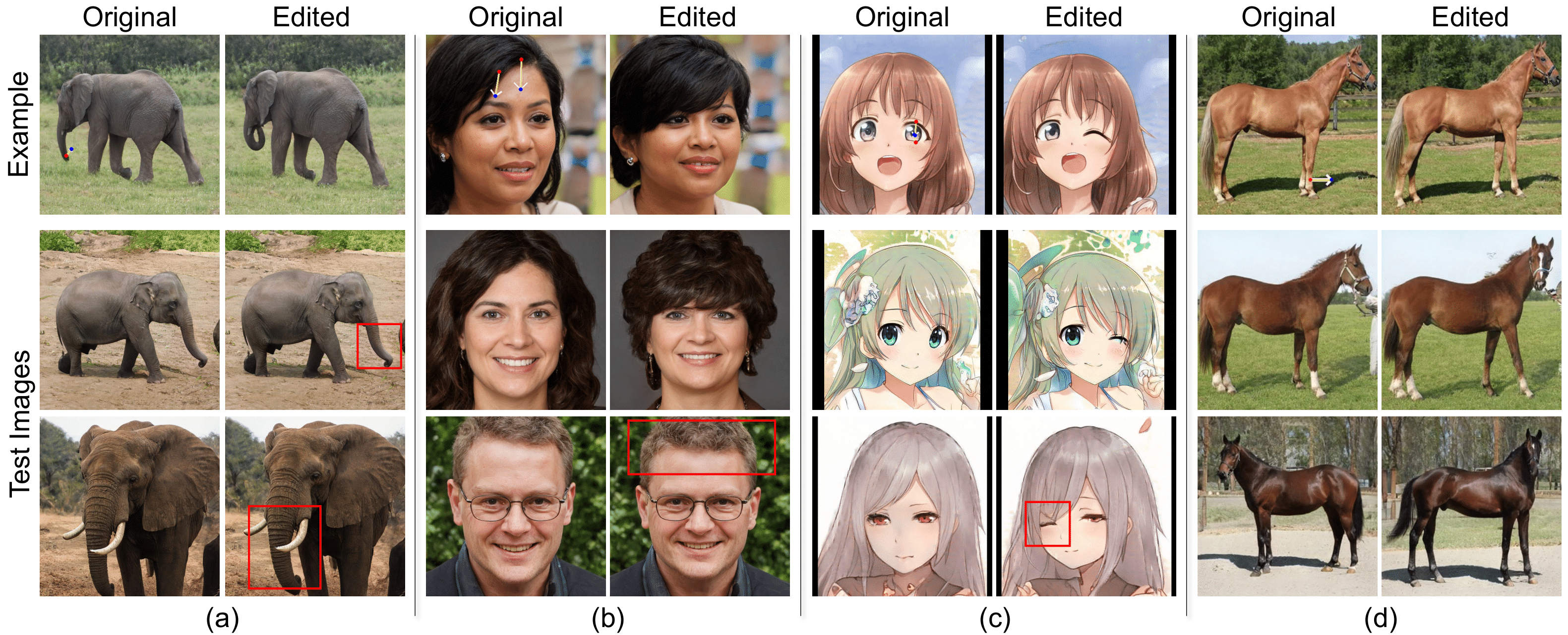

In recent years, image editing has advanced remarkably. With increased human control, it is now possible to edit an image in a plethora of ways, from specifying in text what we want to change to directly dragging the contents of the image in an interactive, point-based manner. However, most of the focus has remained on editing a single image at a time. Whether and how we can simultaneously edit large batches of images has remained understudied. With the goal of minimizing human supervision in the editing process, this paper presents a novel method for interactive batch image editing using StyleGAN as the medium. Given an edit specified by a user on an example image (e.g., make the face frontal), our method can automatically transfer that edit to other test images, so that regardless of their initial state (pose), they all arrive at the same final state (e.g., all facing front). Extensive experiments demonstrate that edits performed using our method have visual quality similar to that of existing single-image-editing methods, while being more visually consistent and saving significant time and human effort.

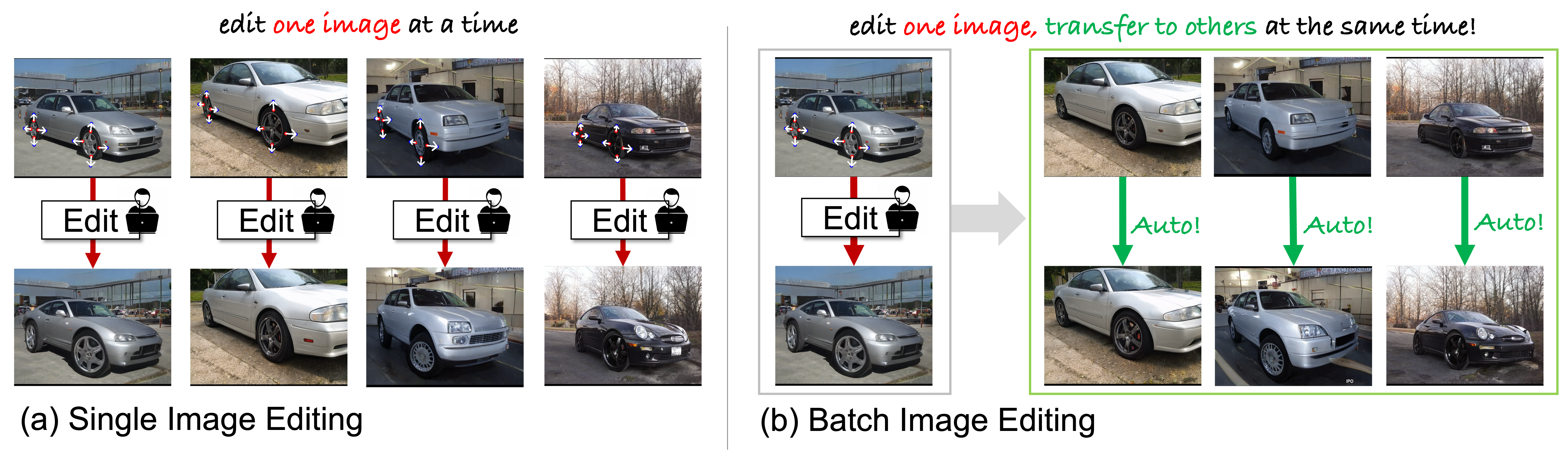

Single Image Editing vs. Batch Image Editing.

(a) Prior work focuses on single image editing. (b) We focus on batch image editing, where the user’s edit on a single image is automatically transferred to new images, so that they all arrive at the same final state regardless of their initial starting state.
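The distinction in the caption, between copying an edit and reaching the same final state, can be illustrated with a toy sketch. This is not the paper's method: it assumes, purely for illustration, that each image is a latent vector and that the edited attribute (e.g., pose) is measured by projection onto a single linear direction. All function and variable names below are hypothetical.

```python
import numpy as np

def edit_direction(w_before, w_after):
    """Unit vector along the user's example edit (assumed linear attribute axis)."""
    delta = w_after - w_before
    return delta / np.linalg.norm(delta)

def transfer_same_offset(w_tests, w_before, w_after):
    """Naive transfer: add the example's latent offset to every test latent."""
    return w_tests + (w_after - w_before)

def transfer_same_final_state(w_tests, w_before, w_after):
    """Move each test latent along the edit direction by a per-image amount,
    so that every image ends at the edited example's attribute value."""
    d = edit_direction(w_before, w_after)
    target = w_after @ d      # attribute value of the edited example
    current = w_tests @ d     # attribute value of each test latent
    return w_tests + (target - current)[:, None] * d

# Toy data: random vectors stand in for latent codes (an assumption).
rng = np.random.default_rng(0)
w_before = rng.normal(size=8)
w_after = w_before + 2.0 * rng.normal(size=8)
w_tests = rng.normal(size=(5, 8))

d = edit_direction(w_before, w_after)
states_offset = transfer_same_offset(w_tests, w_before, w_after) @ d
states_matched = transfer_same_final_state(w_tests, w_before, w_after) @ d
```

In this toy setting, `states_matched` is identical for every test latent and equals the edited example's value, whereas `states_offset` still varies with each latent's starting state. A real generator's latent space need not behave linearly, so this only conveys the goal, not how it is achieved in practice.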

BibTeX:

@inproceedings{nguyen2024edit,
  title={Edit One for All: Interactive Batch Image Editing},
  author={Thao Nguyen and Utkarsh Ojha and Yuheng Li and Haotian Liu and Yong Jae Lee},
  year={2024},
  eprint={2401.10219},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}

Acknowledgements